VOICE AI IN INDIA | LANGUAGE BENCHMARK | APRIL 2026

India is the world’s most linguistically complex market for voice AI. Winning here is not a regional footnote. It is proof that the technology can meet multilingual demands at a scale few other markets present.

No Market Tests Voice AI Like India

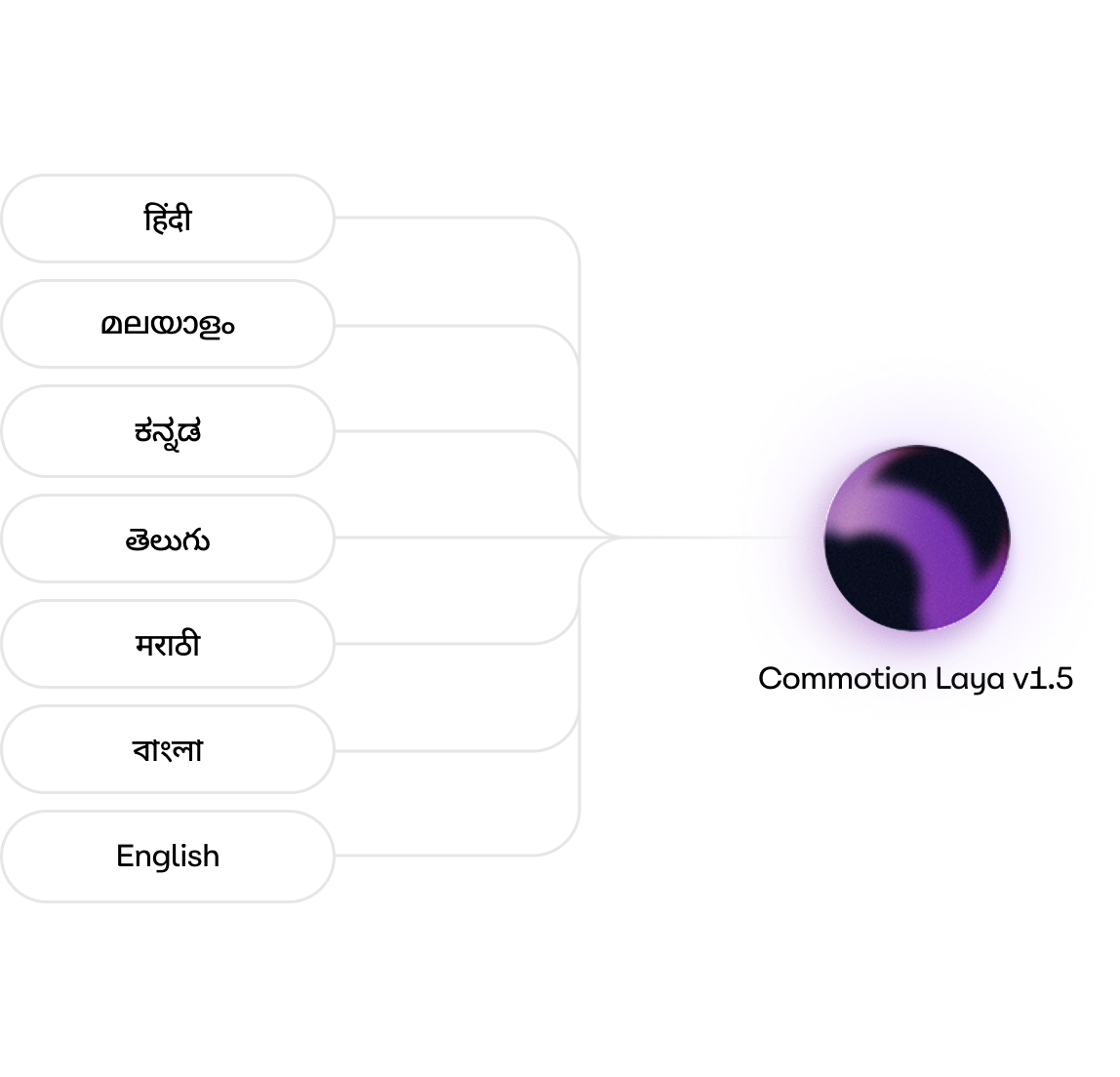

India has 22 constitutionally recognized languages, hundreds of distinct dialects, and a population of 1.4 billion people. For a voice AI system in Indian customer support, pronouncing words correctly in one language is not enough. The system has to speak fluently across Hindi, Tamil, Malayalam, Kannada, Telugu, Marathi, Bangla, and English, often within the same call, because agents and customers code-switch between languages mid-conversation.

The linguistic distance between these languages is enormous. Hindi is Indo-Aryan. Tamil, Telugu, Kannada, and Malayalam are Dravidian. Bangla, also Indo-Aryan, sits at the eastern edge of the subcontinent and follows its own phonological rules. Marathi shares roots with Hindi but diverges sharply in its spoken cadence. A Text-to-Speech (TTS) model that sounds natural in one of these languages may sound stilted, robotic, or simply incomprehensible in another.

This is the environment in which Commotion Laya v1.5 was recently benchmarked by Josh Talks Research, an independent voice AI evaluation specialist. The study, sponsored by Commotion, Inc. and conducted independently, was not designed to treat India as a special case. It was designed to test real-world customer support performance, and India happened to be where that test was most demanding.

The Benchmark: Blind, Human, and Grounded in Real Support Calls

The Josh Talks Research study used blind, head-to-head human comparisons. Listeners evaluated audio rendered at 8 kHz, the standard sampling rate of narrowband telephony, so the test reflected the audio conditions under which these models would be deployed in a live call center. Speaker gender was matched across models.

Across the India-relevant portion of the evaluation, the study covered:

Prompts were built around real CX workflows covering telecom, banking, insurance, healthcare, e-commerce, and logistics, grounded in specific resolution paths rather than generic phrases. The evaluation was designed, in the words of the researchers, to test “not just informational correctness, but whether the model sounded human, considerate, and emotionally aware when it mattered most.”

The Results: Commotion Leads Across All Eight Languages

On decisive votes (ties excluded), Commotion Laya v1.5 was preferred over each of the three competitors in the main telephony benchmark, and its lead was consistent across every language in the comparison. This was not a win in English only, or in Hindi alone. The preference for Commotion held across the full breadth of the tested Indian language stack.

The empathy result is particularly striking given the nature of Indian customer support calls, such as insurance claims, hospitalization follow-ups, and billing queries. In India, where a distressed caller may switch from Hindi to a regional mother tongue mid-sentence while explaining a medical claim, a voice AI that sounds robotic or stumbles on pronunciation can quickly erode customer experience and trust.

Commotion’s advantage in perceived empathy suggests that Commotion Laya v1.5 is not just better at producing technically accurate audio in these languages, but that it also sounds appropriate in emotionally sensitive moments. That is a much harder capability to engineer.

Why the Issue Rate Gap Explains the Win

The benchmark’s issue-tagging data sheds light on the mechanism behind Commotion’s lead. Competing models were flagged significantly more often for irregular pacing, mispronunciation, robotic delivery, and missing-word errors.

Commotion Laya v1.5’s issue rate was approximately 31% across the benchmark, lower than that of the competing models, and its delivery was more reliable and consistent, with fewer breakdowns.

In a multilingual Indian context, delivery breakdowns carry a particular cost. A mispronounced name in Tamil or a dropped word in Malayalam is not a minor quality issue. It can render a sentence unintelligible to a native speaker. Commotion’s lower breakdown rate across these languages is a significant contributor to its lead in listener preference.

What This Means Beyond India: A Signal for Every Complex Market

India is the hardest test in the world for multilingual voice AI. It is not just the number of languages; it is their diversity, their phonological distance from one another, the code-switching that happens in real conversations, and the expectations of 1.4 billion speakers who can immediately hear when something sounds off.

A voice AI system that performs at this level across eight languages spoken in India is demonstrating something important: its underlying model quality is not language-specific. It is robust. It generalizes.

For global enterprises evaluating voice AI across markets, whether in Southeast Asia, the Middle East, Latin America, or Sub-Saharan Africa, this benchmark provides a meaningful proxy. Commotion’s performance indicates that it is well suited to linguistically complex, multilingual contexts.

In a market known for its linguistic complexity, the study’s results favored Commotion.

Disclaimer: The study reported in this blog post was commissioned by Commotion, Inc. and independently conducted by Josh Talks Research. The views and conclusions expressed in this blog post represent the views of Commotion, Inc. on the report. Commotion, Inc. is not responsible or liable for the content, interpretations, methodology, or conclusions presented in the report. Click [here] to access the report dated April 12, 2026, published by Josh Talks Research, to form your own views. All third-party trademarks belong to their respective owners. Source: “Call-Center CX TTS Benchmark: Commotion Laya v1.5 vs Sarvam Bulbul v3, Cartesia Sonic v3, and ElevenLabs Turbo 2.5,” Josh Talks Research, April 12, 2026.